Significant updates to ChatGPT Search are coming

Since ChatGPT gained the ability to search the web in October 2023, it has worked substantially the same way… until now.

The old way:

- User asks ChatGPT a question

- ChatGPT decides whether the question needs information from the web to answer

- ChatGPT's AI model translates the question into a keyword-style search query

- ChatGPT sends the search query to Bing and gets back results (lists of pages with titles, snippets, etc.)

- ChatGPT's AI model decides which pages are relevant

- ChatGPT retrieves content from those pages using its own bot

- ChatGPT uses the retrieved content as context to answer the user's original question
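As a rough illustration, the steps above can be sketched as a small pipeline. Every name here (`answer_with_search`, `SearchResult`, and the callables passed in) is hypothetical: the model calls, the search backend, and the page fetcher are stand-ins, and this is not OpenAI's actual implementation.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class SearchResult:
    url: str
    title: str
    snippet: str


def answer_with_search(
    question: str,
    needs_web: Callable[[str], bool],
    to_query: Callable[[str], str],
    search: Callable[[str], List[SearchResult]],
    is_relevant: Callable[[str, SearchResult], bool],
    fetch: Callable[[str], str],
    generate: Callable[[str, List[str]], str],
) -> str:
    """Hypothetical outline of the 'old way' flow described above."""
    # Decide whether the question needs the web at all.
    if not needs_web(question):
        return generate(question, [])
    # Rewrite the question as a keyword-style search query.
    query = to_query(question)
    # Ask the search backend (Bing, in the old flow) for results.
    results = search(query)
    # Keep only the results judged relevant to the question.
    relevant = [r for r in results if is_relevant(question, r)]
    # Fetch every relevant page fresh; the old flow had no cache.
    pages = [fetch(r.url) for r in relevant]
    # Answer the original question with the fetched pages as context.
    return generate(question, pages)
```

The point of the sketch is the fetch step: in this flow every cited page is requested again on each query, which is why bot traffic tracked usage so closely.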

When OpenAI rolled out SearchGPT – its 2.0 search experience – late last year, it was a major upgrade for users. It brought a better UI for citations, a map UI for local search results, decisions about when to search that better matched user intuitions, and a "Search Mode" toggle that always searches the web.

But aside from specific news partnerships, the fundamentals of how ChatGPT finds and accesses content on the web remained the same, and the basic info website operators and publishers needed to know has been fairly stable. Not anymore.

Here's what's changing, based on our observations of ChatGPT behaviors, telemetry about AI bot interactions across the web, and OpenAI's own announcements.

Search UI improvements coming to consumers starting with premium plans

The ChatGPT experience continues to evolve. OpenAI runs many, many experiments involving the ChatGPT UI across all customer tiers, but their general pattern in recent months has been to roll out major changes starting with the most premium plans, eventually open them to free logged-in users, and then finally to logged-out (incognito) users. For example, ChatGPT Search was made available to logged-out users only at the beginning of February 2025.

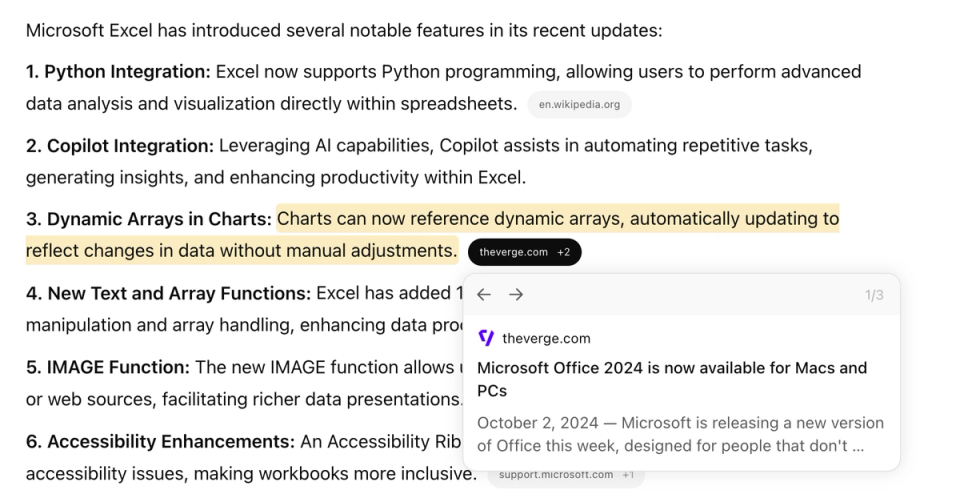

Large updates to search are now rolling out to Pro and Plus plan accounts. The new UI includes an updated citation "chip" design and clearer attribution when multiple sources contribute to a particular part of the response.

And when users hover over search results in the "Sources" sidebar, they can now directly visualize where the source is being used. (Maybe small, but I love this feature. Kudos to the ChatGPT product team.)

Additionally, when search is being used, ChatGPT starts responding much faster than before. This isn't a coincidence – it's the result of changes to OpenAI's search infrastructure that alter where search results come from and how ChatGPT interacts with content on the web.

ChatGPT brings search indexing in-house; Content retrieval updates; Mixed bag for site operators

Since October 2023, ChatGPT has used Bing results for search. Early on, we identified site compatibility with Bing and its Bingbot search indexer as crucial. That was a shift in the SEO environment, since Google has historically dominated market share in traditional search.

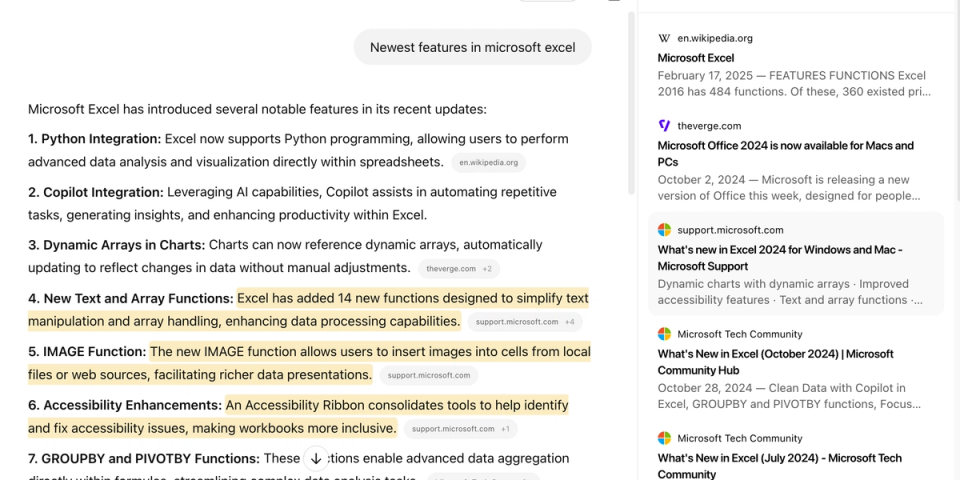

As part of the SearchGPT launch, OpenAI documented and began operating a new search indexer: OAI-SearchBot. You can see estimated traffic volumes for OAI-SearchBot below courtesy of Cloudflare Radar – it has ramped up significantly since the initial launch. But over the past six months it has been unclear how important SearchBot was to the ChatGPT Search pipeline.

As a reminder, OpenAI operates three distinct bots or "user agents" on the public internet. The other two are:

- GPTBot is OpenAI's training data crawler. Data collected by GPTBot is used for training future AI models – it doesn't directly correlate with use of ChatGPT at all, and you can safely block it without limiting your visibility in ChatGPT search results.

- ChatGPT-User is the bot ChatGPT uses to retrieve webpages that come back in search results. To date, ChatGPT has re-retrieved page content every single time it wants to cite a page; while inefficient, this gives website operators maximum control. It also means traffic levels from ChatGPT-User directly correlate with ChatGPT Search usage overall – not an inference you can make from traffic from bots like Googlebot or Bingbot.
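To make that distinction concrete, here is a minimal sketch of a robots.txt that blocks the training crawler while leaving the two search-related bots alone, checked with Python's standard `urllib.robotparser`. The rules and the `example.com` URL are illustrative assumptions, not a recommendation for any particular site, and the helper name `allowed_agents` is made up for this example.

```python
from urllib.robotparser import RobotFileParser

# Illustrative rules: opt out of training, stay visible in search.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /
"""


def allowed_agents(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Return, for each user agent, whether these rules let it fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


result = allowed_agents(
    ROBOTS_TXT,
    "https://example.com/blog/post",  # placeholder URL
    ["GPTBot", "OAI-SearchBot", "ChatGPT-User"],
)
print(result)
```

Python's parser is only an approximation of how any given crawler interprets the file, so treat this as a sanity check and not as proof of how OpenAI's bots will behave.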

For accounts that have the newly updated ChatGPT Search experience, we now see a very different relationship between user search activity and OpenAI bot traffic.

- First and foremost, in the new experience, ChatGPT-User no longer re-requests page content for every query. While ChatGPT does still retrieve web content in real time when needed, it now also seems to cache page content for a period of time.

As technologists, this is a change we've been expecting to see OpenAI eventually make. Retrieving content from the web is expensive – as models keep getting faster and cheaper, more and more of the cost of operating a service like ChatGPT is in the search and retrieval process, where costs are not dropping 80% per year.

- OAI-SearchBot traffic is correlated with ChatGPT retrievals – but not in real time. After retrieving content we see OAI-SearchBot retrieving site robots.txt files, perhaps to make sure the content is still OK for inclusion in search.

Unlike before, ChatGPT-User itself does not retrieve robots.txt synchronously with the user query, so changes to robots rules may not immediately take effect. (This is not unusual for search services: Google for example documents an SLA of about 24 hours to notice changes to robots.txt.)

- Follow-up queries where content is served from the new cache seem to also trigger OAI-SearchBot to retrieve your web content. This appears to be limited to the specific resources that are showing up in ChatGPT Search and not a full site recrawl, perhaps to asynchronously check if content has changed.

The implication here is that OpenAI is refreshing its search index for certain pages more often based on what users are searching in ChatGPT – a hypothesis that many SEOs have shared with me, but that we haven't seen clear evidence for until now.
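Site operators who want to look for these patterns in their own traffic can start by splitting OpenAI bot requests into content fetches and robots.txt fetches. The sketch below assumes access logs in the common "combined" format. The sample lines and their simplified user-agent strings are made up for illustration, and matching on the user-agent token alone does not prove a request really came from OpenAI.

```python
import re
from collections import Counter

OPENAI_BOTS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User")

# Combined log format: the request is the first quoted field,
# the user agent is the last quoted field on the line.
LINE_RE = re.compile(r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" .* "(?P<ua>[^"]*)"$')


def classify(line: str):
    """Return (bot, path) for OpenAI bot requests, else None."""
    match = LINE_RE.search(line.strip())
    if not match:
        return None
    user_agent = match.group("ua").lower()
    for bot in OPENAI_BOTS:
        if bot.lower() in user_agent:
            return bot, match.group("path")
    return None


def summarize(lines):
    """Count requests per (bot, kind), where kind is robots.txt or content."""
    counts = Counter()
    for line in lines:
        hit = classify(line)
        if hit is None:
            continue
        bot, path = hit
        kind = "robots.txt" if path.split("?")[0] == "/robots.txt" else "content"
        counts[(bot, kind)] += 1
    return counts


# Made-up sample lines with simplified user-agent strings.
SAMPLE_LOG = [
    '203.0.113.5 - - [11/Mar/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; ChatGPT-User/1.0)"',
    '203.0.113.9 - - [11/Mar/2025:10:04:12 +0000] "GET /robots.txt HTTP/1.1" 200 310 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)"',
    '203.0.113.9 - - [11/Mar/2025:10:09:40 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)"',
    '198.51.100.7 - - [11/Mar/2025:10:10:02 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
]

counts = summarize(SAMPLE_LOG)
for key, n in sorted(counts.items()):
    print(key, n)
```

Bucketing these counts by hour would be the natural next step for checking whether OAI-SearchBot fetches trail ChatGPT-User fetches for the same paths.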

Altogether, we believe these changes signal that OpenAI is now completing their transition away from using Bing as the primary search index. ChatGPT citations and Bing result rankings might de-correlate over time. From an analytics perspective, this makes understanding ChatGPT's sources more challenging – before, if you ranked well in Bing for specific topics, your chances of being cited in ChatGPT were higher. That now becomes more "black box".

OpenAI releases Web Search tools and enters the Search Results API market, competing with Bing

Lastly, the clearest signal that change is here came in the form of one of OpenAI's product update livestreams this morning, March 11.

In short, OpenAI is now releasing the ability to have API calls to their models automatically incorporate relevant content from the web, complete with citations. This is similar to Perplexity's Sonar API. Like Sonar, the search results exposed through this API should map directly to the search results available in ChatGPT.

Also similar to Perplexity, this does not mean that the model responses developers get when using this API will completely replicate the ChatGPT experience – there continues to be a lot of proprietary engineering in the ChatGPT product around displaying specific categories of results, user personalization, additional tools, etc. The specific way the model uses the results also will vary depending on developer settings, including the system prompt.

If you'll indulge me in some Kremlinology here, it's interesting to see this product come out in this format and with this unit pricing. While many SEOs are familiar with third-party data providers that sell Google SERP data, it is remarkably little known that Microsoft actually sells Bing search as a service directly via Azure.

While not completely apples to apples, OpenAI's search tool is priced in the same increments (1,000 requests) as Bing and Perplexity and matches Bing's "pay as you go" rate at the lowest "quality" setting ($25/1K), while the default medium-quality option is priced at a slight premium. Considering that it is quite expensive to go from raw search results to usable model context, this is a pretty good deal for agent developers. (On the other hand, Perplexity Sonar pricing is in a completely different ballpark – coming in at $5 per thousand calls.)
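To put the per-thousand rates quoted above in perspective, here is a back-of-the-envelope comparison. The monthly volume of 40,000 requests is an arbitrary assumption, the function name is made up, and the calculation covers request fees only. Any per-token model charges are not modeled.

```python
# Per-1,000-request rates as quoted in the paragraph above (USD).
RATES_PER_1K = {
    "OpenAI web search (lowest setting)": 25.0,
    "Perplexity Sonar": 5.0,
}


def request_cost(requests: int, rate_per_1k: float) -> float:
    """Request fees in USD for a given number of search calls."""
    return requests / 1000 * rate_per_1k


MONTHLY_REQUESTS = 40_000  # assumed volume, for illustration only

costs = {name: request_cost(MONTHLY_REQUESTS, rate) for name, rate in RATES_PER_1K.items()}
for name, cost in costs.items():
    print(f"{name}: ${cost:,.2f}")
```

At that assumed volume the request fees come to $1,000 versus $200, the same 5x gap as the unit prices.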

Takeaways

The AI assistant and search market continues to evolve at lightspeed with announcements like Grok 3, all of the Deep Research products, Google AI Mode (which turns AI Overviews into a full conversational assistant) and more. While there are bumps in the road, AI tools continue to give consumers unprecedented power over the information environment while being easier to use… and sometimes downright fun.

For publishers, site operators, marketers and SEOs, on the other hand, this is a career-defining period of disruption (and that's on top of an unusually volatile period in traditional search ranking). Strong fundamentals – brand reputation, high-quality websites, clear and compelling writing – are still crucial, but the environment has changed, and tools and practices must change with it.

If you're looking for a partner to navigate the shift, at Scrunch we're meeting with dozens of brands every week to help them adapt and thrive as AI use becomes even more pervasive. Give us a ring if you'd like to know more.

(p.s. This isn't even covering the other two announcements in OpenAI's event today, which are also major updates for folks building AI systems. More on that later.)